We might have lost the basketball game, but as a coach, it was one of the most inspiring performances I’ve seen from my team.

We had no business winning. Though we were good, our opponents were undefeated despite having played some of the other top teams in our league. As I watched them move like athletic machines during warmup, I wondered if I was going to witness a bloodbath. But my team was undaunted. I could tell you about how we played physically, how we shot better than we had all season, how we kept the game close, and how we locked down on defense. But it would be easier to just say, “We played with belief.” If we hadn’t, we wouldn’t have just played average; the other team would have demolished us. We may have lost, but because my players believed in their skills and experience, they made it a much closer game than many would have predicted.

Belief isn’t just for sports. In my view, it’s a vital ingredient for creating change and making progress on some of the biggest problems we face. But this belief cannot grow out of nothing; it needs to stem from substantive data, reasoning, and knowledge. Otherwise, it’s easy to just sit back and let someone else solve our problems. The wrong kind of optimism is a liability, but the right kind propels us forward.

Getting the knowledge requires people doing the difficult work of digging through the scientific record, gathering data, and synthesizing it. It requires people like Hannah Ritchie, the Deputy Editor and Lead Researcher at Our World in Data, a non-profit that’s frankly a jewel of the internet for data exposition about the world’s problems.

Since Hannah has degrees in geoscience, carbon management, and global food systems, you might think that of course she would be the kind of person to be optimistic. And she is, but that wasn’t always true. In her new book Not the End of the World, Hannah writes about her early university years:

“In 2010 I started my degree in Environmental Geoscience at the University of Edinburgh. I showed up as a fresh-faced 16-year-old, ready to learn how we were going to fix some of the world’s biggest challenges. Four years later, I left with no solutions. Instead, I felt the deadweight of endless unsolvable problems. Each day at Edinburgh was a constant reminder of how humanity was ravaging the planet.” (Introduction)

She almost quit environmental science because doom dominated her worldview. After all, what’s the point in working in an area when you believe your efforts are futile? But then Hannah watched Hans Rosling present on metrics of human well-being, which changed everything for her. Rosling showed how the world was actually getting better by many meaningful measures (such as the falling fraction of people living in extreme poverty and the falling estimated fraction of children who die before the age of five).

From there, Hannah began digging into the data more. She began zooming out, looking at historical trends instead of only reading the news. She took a job at Our World in Data, and her work over nearly a decade has led to this book.

Seamen hurry near the harbour, making final preparations for their vessel. They crunch through a blanket of red, orange, and yellow leaves. Their destination: Europe. A cool wind reminds them to keep working, anything to keep warm against the falling temperatures of autumn. It’s October 15th, 1657, and it’s departure day for the ship docked in the St. Lawrence River near Québec City.

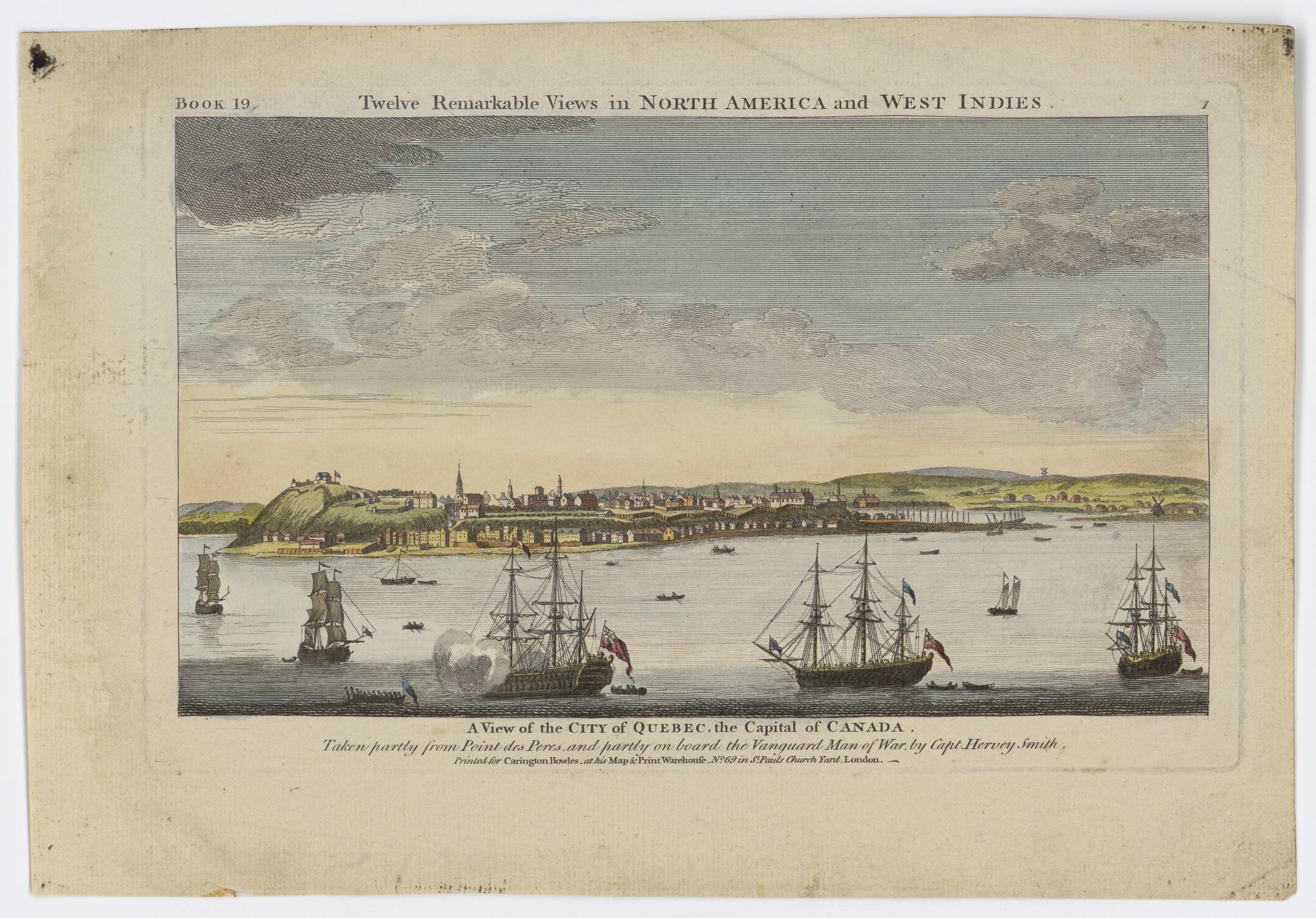

A view of Québec City around 1760, a hundred years later. Note the harbour and the small size of the settlement, even then. Created by Hervey Smith. Source

Today’s about more than just this ship, though. It’s the final outbound trip to Europe before the cold and ice arrive, making travel down the mouth of the river impossible. For the winter, the colonists in Québec City will have to be self-reliant.

Before they raise the anchor though, several colonists have approached the sailors, asking if they could carry their correspondence. This is their final chance to send any letters to their loved ones back in Europe.

One of these people is probably Marie Guyart, a founding member of the Ursuline convent here in Québec. As Jane E. Harrison recounts in the introduction of her PhD thesis (where I learned of this story), this is the final time Marie will be able to communicate with her son Claude before the ice closes her off from her homeland. After today’s letter, she will have to wait until the snow melts, the plants bloom, and the river thaws out before voyages begin anew.

The communication cadence between a colonist in Québec (New France) and a resident of Europe would be agonizing by our modern standards of email. “They’re taking _forever_ to reply” wasn’t hyperbole but a fact of life. Remember: a voyage across the ocean took weeks or months, even during the summer. This delay increased the stakes of each letter, turning letter-writing into a sort of art form. There wasn’t any official postal service at the time to deliver mail throughout the colonies and Europe (though there were couriers and some individuals who would carry it). Instead, people would often ask ships to carry their correspondence, perhaps paying them a small fee for the service. Postal organizations would only really begin in the 18th century.

Here in Québec, one big change occurred in the 18th century with the advent of a postal service connected to the burgeoning British colonies. As Harrison explains (page 2), people could continue writing through the winter by routing their letters south to New York, where ships could then leave for Europe. This development helped solidify postal routes and service throughout the colonies and enabled contact with Europe year-round. Acceptance came slowly, though, since people could often find others willing to carry their letters even more quickly. But over time, the postal services became the standard.

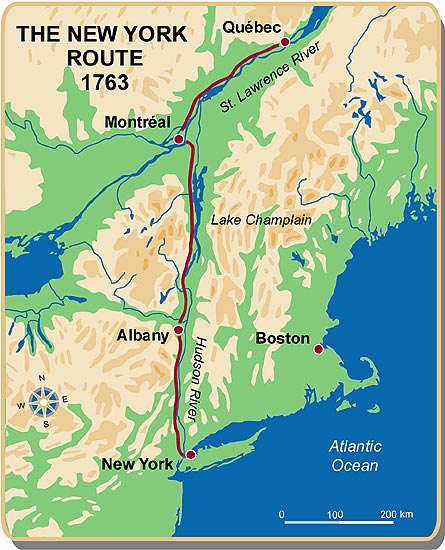

The route to New York. From the Canadian Museum of History (Andrée Héroux). Source

Steamships and railways helped propel the postal service into a faster, standardized system that people could rely on instead of entrusting their mail to random travelers (though there was still plenty of that). For example, when Canada ran its first railway line in 1836, delivery time from Montréal to New York decreased by 5 hours (a 7% improvement). In my view, while technology was important, it was also important to establish regular shipping and railway routes that people could plan around. Communication delays decreased, increasing the connectivity of people who lived far away.

Still, correspondence is a far cry from conversation. That’s fine if you’re placing an order. It’s less great if you want to keep in touch with a loved one who is far away. Electricity changed that. Over the last two centuries, humans invented and implemented the telegraph, the telephone, the radio, and finally the internet. Each one required time and effort to establish the infrastructure, but we gained enormous connectivity with people far away from us. These inventions also expanded our range of possibilities. The telegraph accelerated the post. The telephone and radio accelerated delivery of our voice. And the internet opened up the possibility for real-time video calls.

These days, I don’t think of physical space as a barrier to communication. I have friends who I’ve literally never met in the physical world. I’ve talked, laughed, and built relationships with them online, all because of our ability to harness the speed of light for our communication infrastructure. There are downsides to such a culture, but connecting with friends isn’t one of them.

If we take a broad view of history, we’ve increased our potential for sending messages across vast distances in a timely manner. Not all of the world is connected to the same degree, but most regions are more connected than they were in the past. What once could be a perilous journey across the ocean to deliver a message from a colonist in New France to Europe is now just a few taps and seconds away. This can create the illusion that connectivity is only an implementation issue since the speed of light is effectively instantaneous relative to distances on our planet.

Looking to a (hopefully not-so-distant) future in which we settle on other planets, how hard could expanding our communication network to encompass them really be?

The answer: very hard. The problem is that space is big. No, I mean really big. Just traveling to the nearest star at light speed would take over four years (and by the end, you would curse the laws of physics for such a sluggish cosmic speed limit). If we want to become a multi-world species, I wonder how the speed of light will fracture our conversations with loved ones during a trip, our calls back home when we move to new worlds, and our cadence of receiving news from other planets.

Many of us are used to hopping on a call with another person, talking in real-time. But we won’t be able to do that if we’re trying to contact someone on another planet or far-away station. It can take up to around twenty minutes for a light signal to travel from Earth to Mars (depending on where the two planets are in their orbits), over half an hour for Jupiter, and over an hour for Saturn. And we’re still talking about the solar system here!
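To put rough numbers on those delays, here is a minimal sketch in Python. The distances in `APPROX_DISTANCE_AU` are my own rounded, illustrative values for the closest and farthest Earth-to-planet separations, not precise ephemeris data, and the function name `one_way_delay_minutes` is just a label I chose for this example.

```python
# Speed of light in vacuum, in km/s (exact by definition of the metre).
SPEED_OF_LIGHT_KM_S = 299_792.458
# One astronomical unit in km (exact by the IAU 2012 definition).
AU_KM = 149_597_870.7

# ASSUMPTION: rounded closest/farthest distances from Earth, in AU.
# Real values vary from year to year with the planets' orbits.
APPROX_DISTANCE_AU = {
    "Mars": (0.37, 2.68),
    "Jupiter": (3.9, 6.5),
    "Saturn": (8.0, 11.0),
}


def one_way_delay_minutes(distance_au: float) -> float:
    """Time for a light signal to cover `distance_au`, in minutes."""
    seconds = distance_au * AU_KM / SPEED_OF_LIGHT_KM_S
    return seconds / 60


for planet, (nearest, farthest) in APPROX_DISTANCE_AU.items():
    low = one_way_delay_minutes(nearest)
    high = one_way_delay_minutes(farthest)
    print(f"{planet}: {low:.0f} to {high:.0f} minutes one way")
```

Each astronomical unit adds roughly 8.3 minutes of one-way delay, and a reply needs the round trip, so the wait between asking a question and hearing the answer is double these figures.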

The time delay is proportional to distance, but my enjoyment of a conversation is not. Anything up to a few seconds of delay is annoying but manageable. Ten seconds, though? I don’t think I’m going to want to have many of those exchanges. Electricity once bridged distant locations to turn correspondence into conversation, but becoming a multi-planetary civilization might force us back to a slower mode of conversation.

Imagine a young engineer traveling to Mars to begin her career. For the first few days, conversations with her parents remind her of just having a very laggy internet connection. After a week, they’re interrupting each other all the time due to roughly a five-second delay. After two weeks, she and her parents learn to pause for several awkward moments each time one of them speaks. After a month, the engineer begins reading a book while she waits for her parents’ reactions, nearly half a minute late. By the second month, she tells her parents they should simply start exchanging video messages.

Unless we’re communicating with nearby worlds, I suspect we will revert to the cadence of email and letters, where we don’t expect a reply any time soon. That means no video calls, no online courses with synchronous sessions, and a lot more recorded video messages (or even just text).

I also wonder if it will make our worlds more isolated. After all, if I can only communicate with you through a delay, will I want to put in the effort to do so? Maybe, but I’d probably rather make friends with those on nearby worlds with a bearable time delay. Perhaps sending long-form messages with lengthy time delays will become an art form like it did for the colonists in New France. Or maybe we’ll all become experts at using asynchronous tools the way remote workers currently do.

For official communication from organizations such as governments, I’m unsure what will happen. My guess would be that organizations will focus their scope around worlds they can rapidly communicate with. After all, you don’t want to wait on a reply for a time-sensitive issue because the head office of your judicial system is light years away.

The common theme: Our experience of time will probably limit the practical sphere of connectivity.

We’ve done really well at pushing our communication infrastructure to the limits of the laws of physics. Saturating them allowed us to temporarily convert correspondence into conversation. But when it comes to increasing connectivity in an expanding civilization, space always wins.

Note: I took some liberties in painting the scene surrounding October 15, 1657. However, the date and departure of the vessel do match the records. I learned of this fascinating account from Jane E. Harrison’s PhD thesis, “An Intercourse of Letters: Transatlantic correspondence in early Canada, 1640-1812”.

Thank you to Malcolm Cochran, Rob Tracinski, Heike Larson, and Camilo Moreno-Salamanca for feedback on earlier drafts.

Endnotes